998 Scoring Dimensions. 254 Tests. Your Hardware.

Everything AiBenchLab does, organized by category. No marketing — just what's built, what tier it requires, and what it does.

998 Scoring Dimensions: 998 ways to prove an AI model can, or can't, do the job

254 Tests: Across 11 domains from reasoning to adversarial safety

10 Providers: Built-in llama.cpp, Ollama, LM Studio, LocalAI, OpenAI, Anthropic, Gemini, Grok, Groq + custom

22 Suites: Pre-built evaluation suites across 4 categories

Full Feature List

Tiers: All tiers, Pro+, Consultant+, Enterprise

Testing Engine

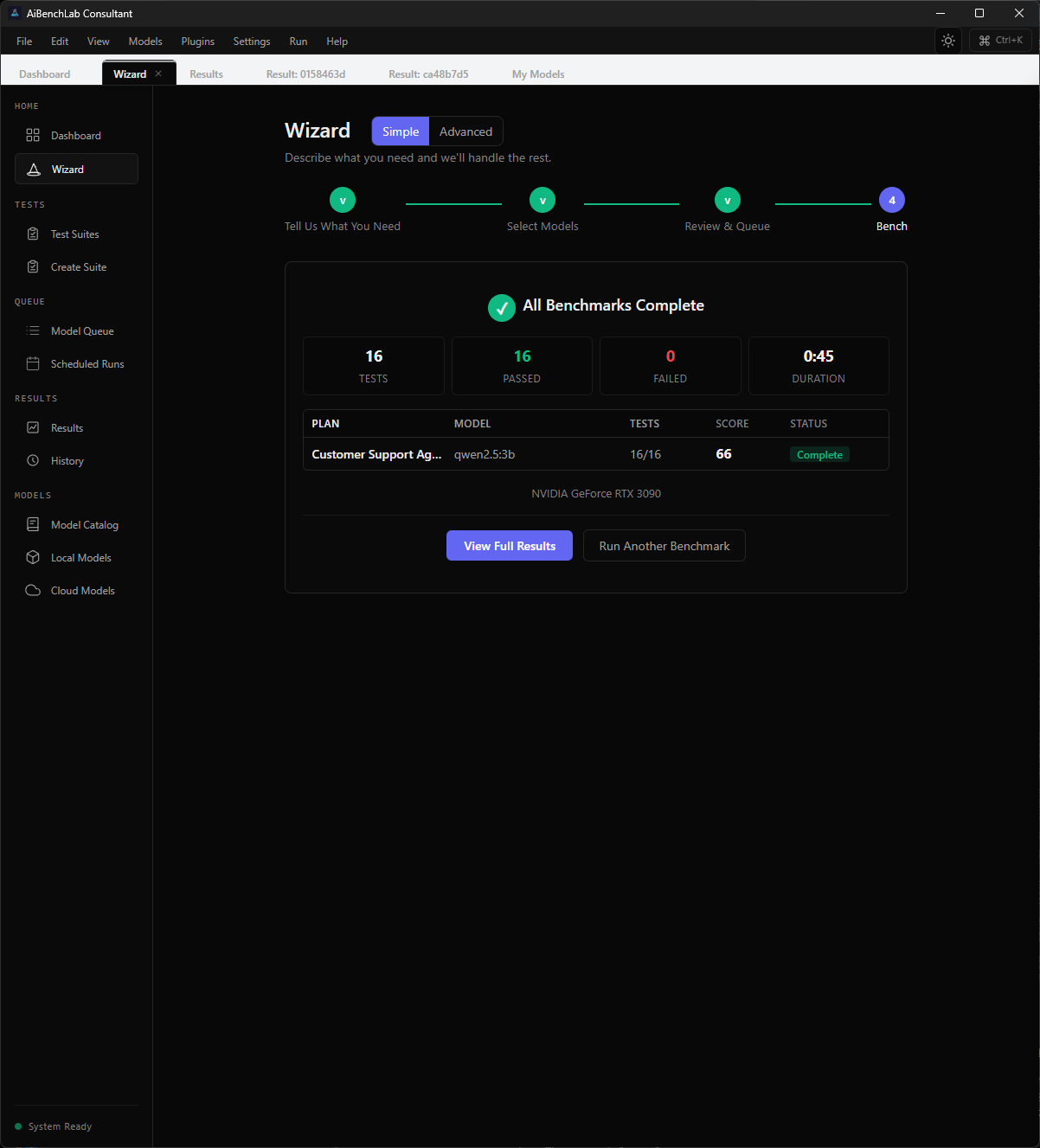

998 scoring dimensions across 254 tests and 11 domains — Easy to Edge Case difficulty, covering reasoning, coding, chat, multimodal, tool calling, agentic, safety, and more.

All 22 pre-built evaluation suites — Production, role-specific, comparison, and app-specific suites. Trial includes 2.

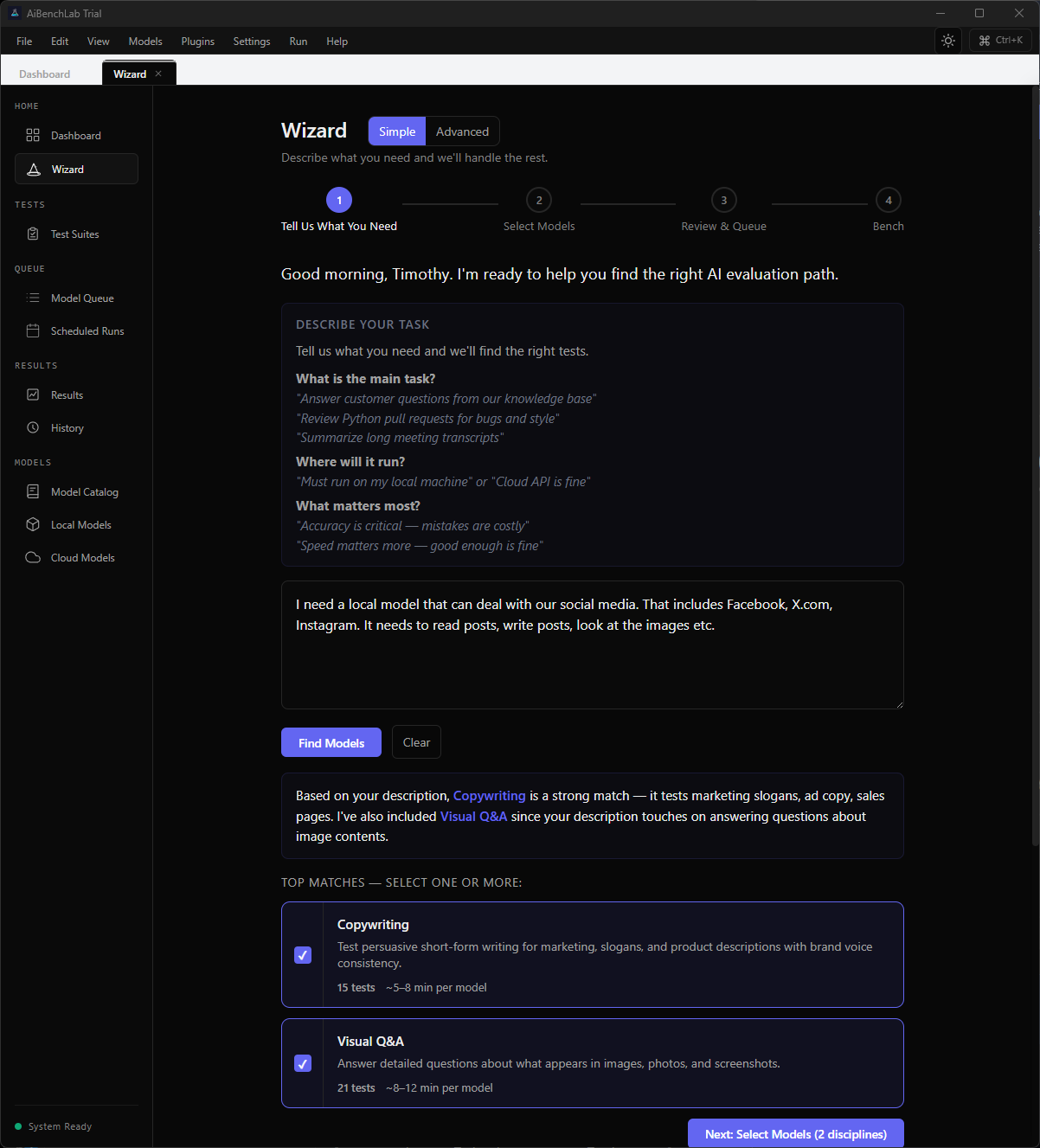

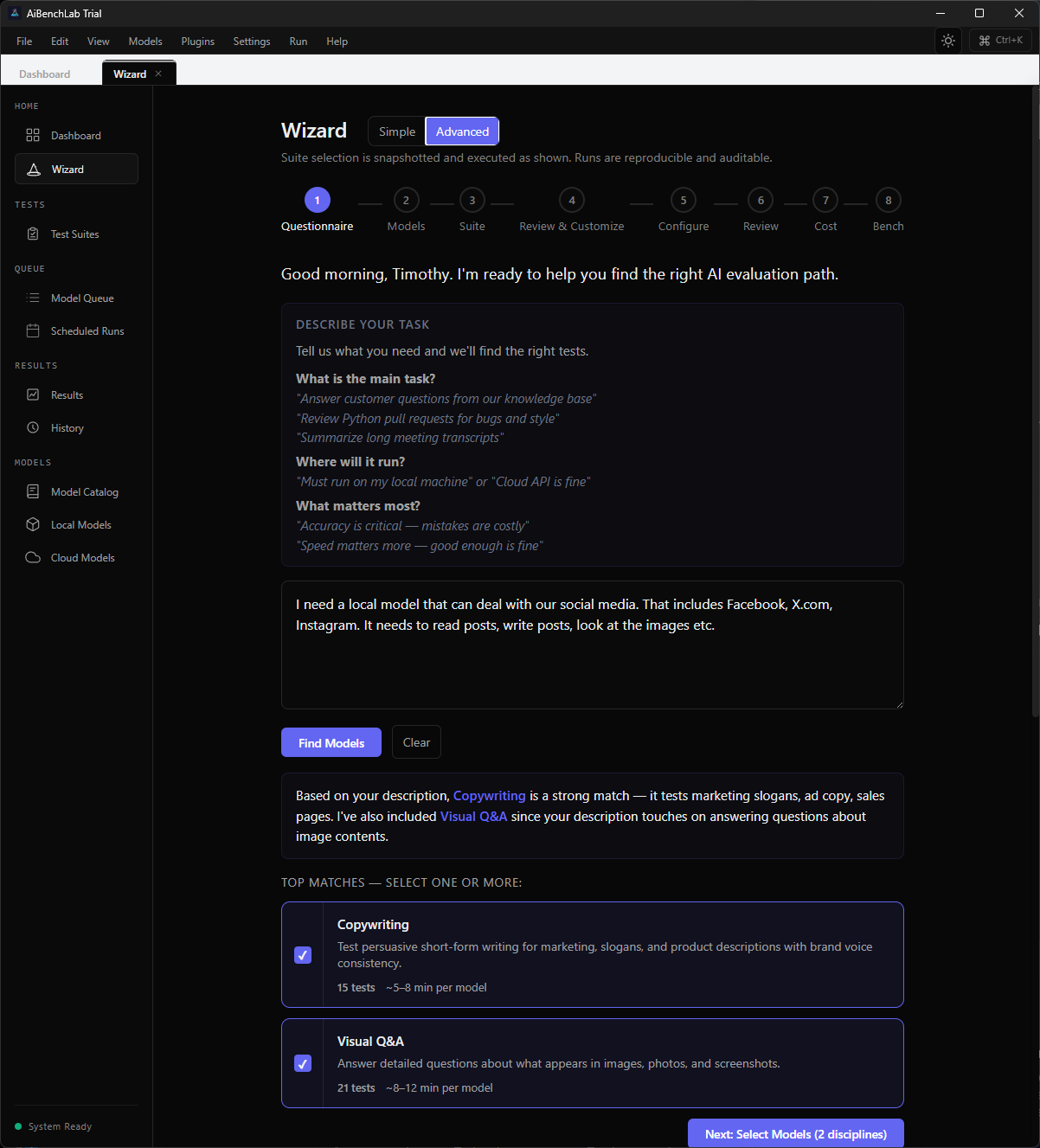

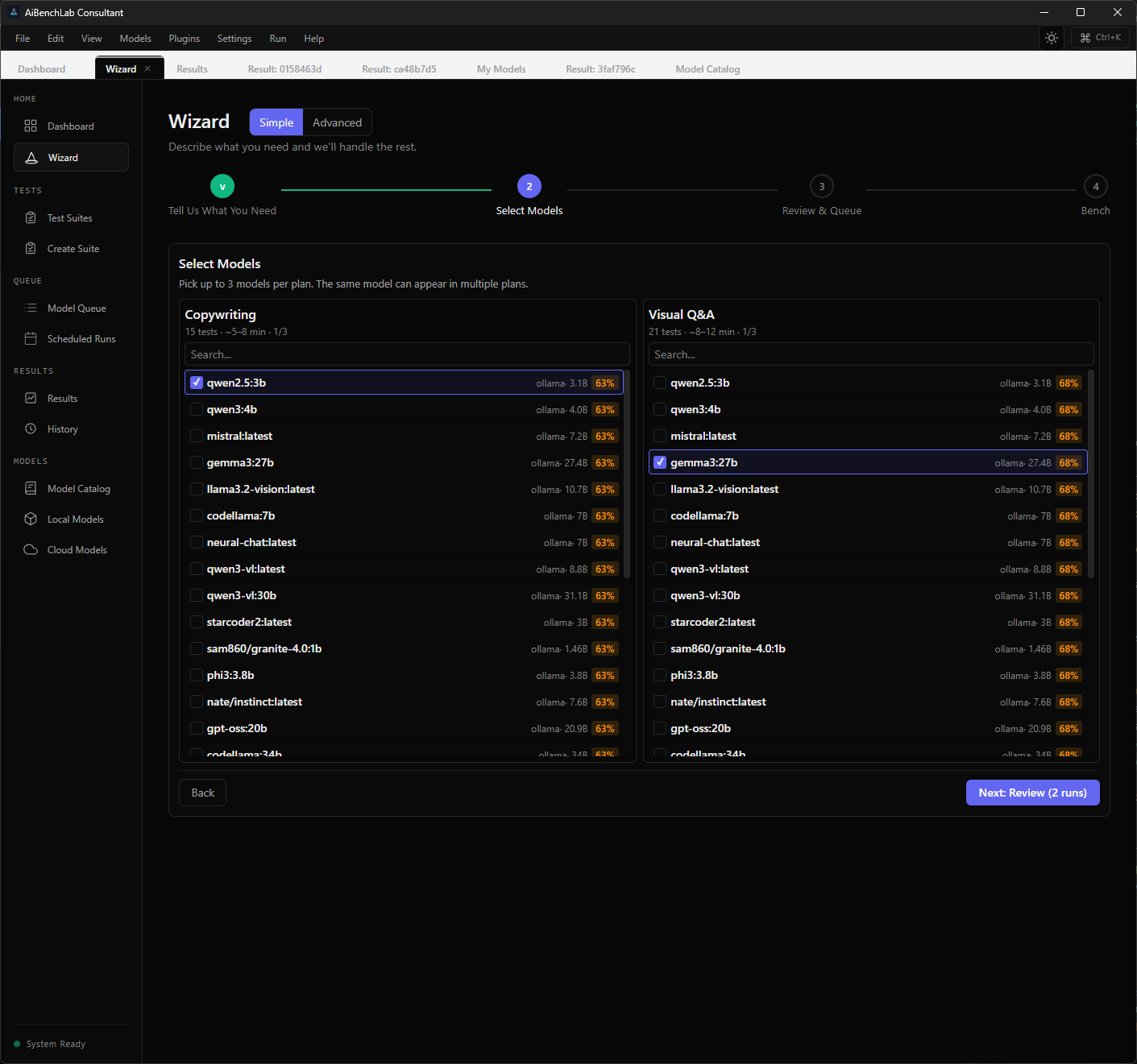

Pro+ 8-step guided wizard — AI-powered intent classification matches your task to the right evaluation disciplines automatically.

All Composite scoring — Multi-dimensional score across correctness, efficiency, safety, and clarity.

All Position-weighted scoring — Catches models that game test order by weighting position in the response.

All Hallucination penalty — Built into every text-based evaluation. Factual accuracy is not optional.

All Deterministic evaluator — Same input, same score, every time. No variance in evaluation.

All Safety-first scoring — Deployment risk and adversarial domains scored separately so critical failures surface immediately.

All Add/remove tests from suite — Customize which tests run before execution.

Pro+ Create custom suites — Build suites from scratch with domain weights and pass thresholds.

Consultant+ Save As New Suite — Save customized configurations as reusable suite templates.

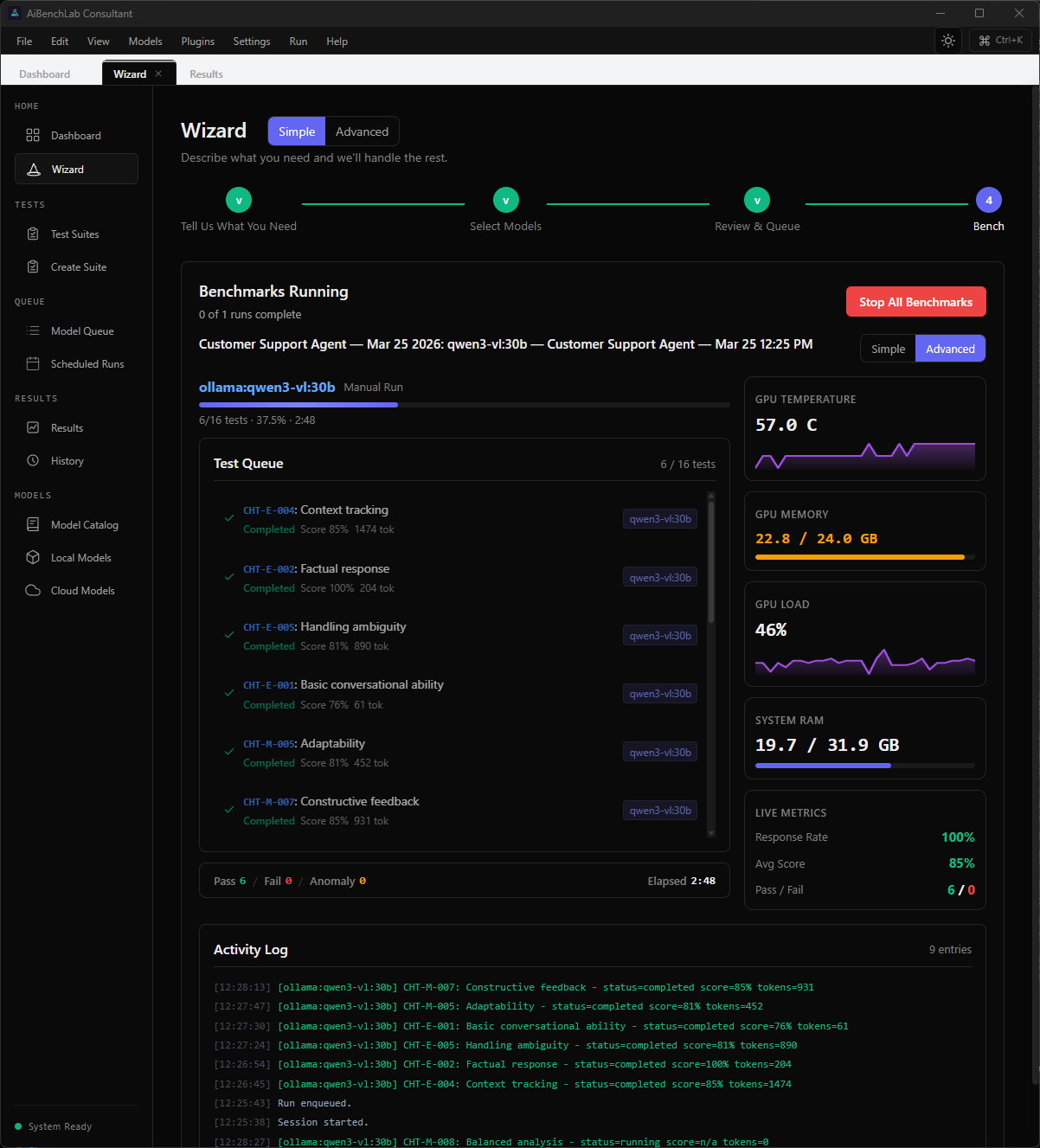

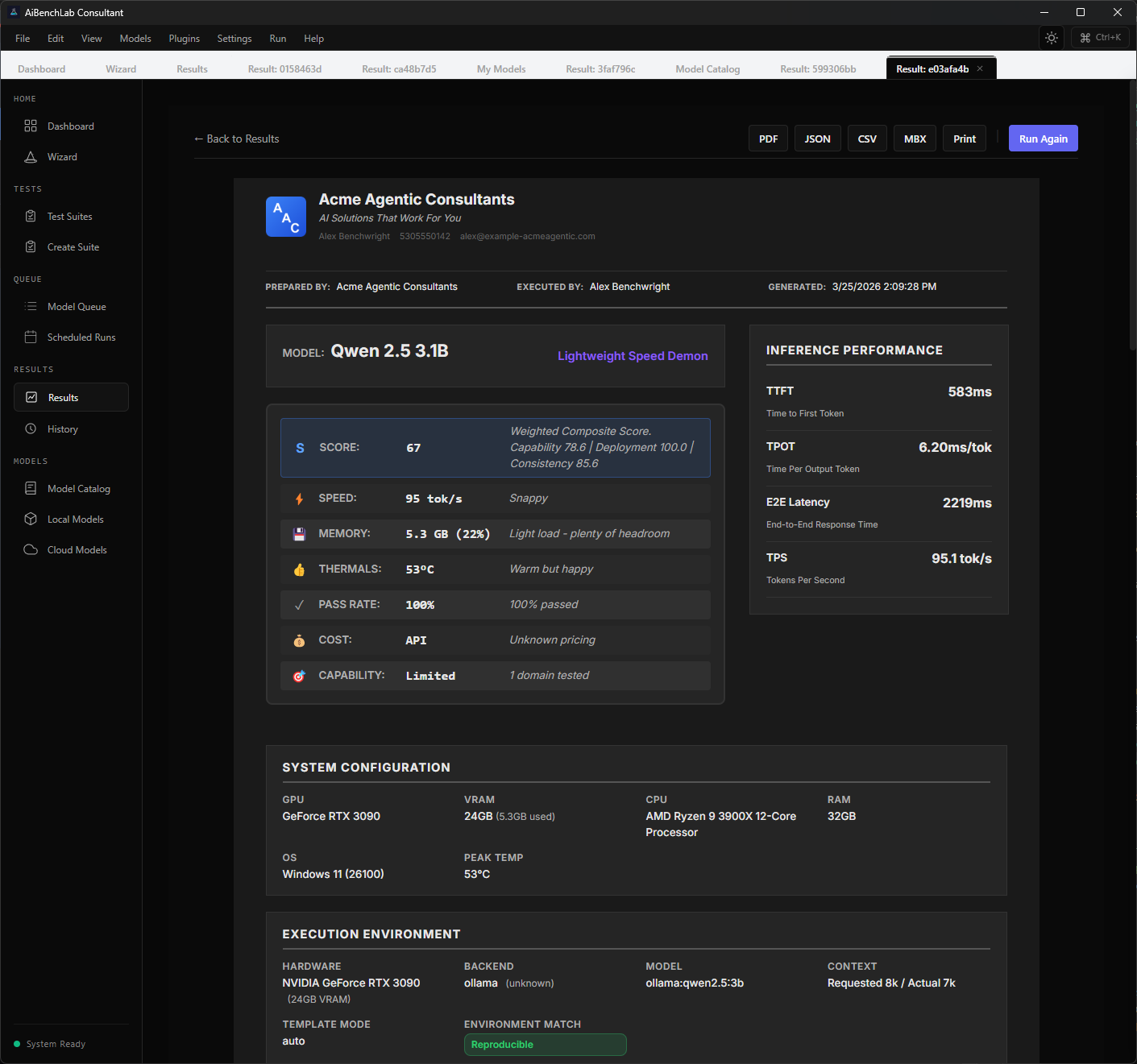
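As a rough illustration of the composite scoring and pass thresholds mentioned in the Testing Engine list, the sketch below combines the four named dimensions (correctness, efficiency, safety, clarity) into one weighted score. The weights, the 0.7 threshold, and the function names are hypothetical; AiBenchLab's actual formula is not described on this page.

```python
# Hypothetical weights for illustration only; not AiBenchLab's real values.
WEIGHTS = {"correctness": 0.4, "efficiency": 0.2, "safety": 0.3, "clarity": 0.1}


def composite_score(scores: dict, weights: dict = WEIGHTS) -> float:
    """Return the weighted mean of per-dimension scores, each in [0, 1]."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    total_weight = sum(weights.values())
    weighted_sum = sum(scores[dim] * w for dim, w in weights.items())
    return weighted_sum / total_weight


def passes(scores: dict, threshold: float = 0.7) -> bool:
    """Check the composite score against a (hypothetical) pass threshold."""
    return composite_score(scores) >= threshold


example = {"correctness": 0.9, "efficiency": 0.5, "safety": 1.0, "clarity": 0.8}
print(round(composite_score(example), 2), passes(example))
```

Because the function is a pure calculation with no randomness, the same inputs always give the same score, which is the property the "Deterministic evaluator" entry describes.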

Performance Metrics

TTFT (Time to First Token) [Pro+]: How fast the model starts responding.

TPOT (Time Per Output Token) [Pro+]: Per-token generation speed.

TPS (Tokens Per Second) [Pro+]: Overall throughput.

E2E Latency [Pro+]: Total time from prompt to complete response.

Per-domain breakdown [All]: Scores per domain in results view.

Individual test results [All]: Expandable per-test detail with scoring criteria.

Model comparison [Pro+]: Side-by-side comparison of up to 15 models.

Speed vs accuracy scatter plot [Pro+]: Visual tradeoff analysis across models.

AI-generated recommendations [Pro+]: Pre-benchmark model recommendations based on questionnaire.

Benchmark history [Pro+]: Full session history with search, filter, and pagination.

GPU/VRAM monitoring [All]: Live temperature, load, memory with sparkline charts.

Thermal protection [All]: Auto-pause between models at configurable temp threshold. Emergency abort at 95°C.
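The four latency metrics in this section (TTFT, TPOT, TPS, E2E) are commonly derived from the time a request is sent and the arrival time of each streamed token. The sketch below uses those common definitions; it is an assumption that AiBenchLab computes them the same way, and the `latency_metrics` function and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LatencyMetrics:
    ttft_s: float  # time to first token, seconds
    tpot_s: float  # mean time per output token after the first, seconds
    tps: float     # tokens per second over the whole request
    e2e_s: float   # end-to-end latency, seconds


def latency_metrics(request_sent: float, token_times: list) -> LatencyMetrics:
    """Derive metrics from a send timestamp and per-token arrival timestamps."""
    if not token_times:
        raise ValueError("no tokens were received")
    n = len(token_times)
    ttft = token_times[0] - request_sent
    e2e = token_times[-1] - request_sent
    # TPOT covers only the decode phase, so the first token is excluded.
    tpot = (e2e - ttft) / (n - 1) if n > 1 else 0.0
    tps = n / e2e if e2e > 0 else 0.0
    return LatencyMetrics(ttft, tpot, tps, e2e)


m = latency_metrics(0.0, [0.5, 0.75, 1.0, 1.25, 1.5])
print(m)
```

Separating TTFT from TPOT matters because the first token includes prompt processing, while later tokens reflect steady-state generation speed.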

All Providers

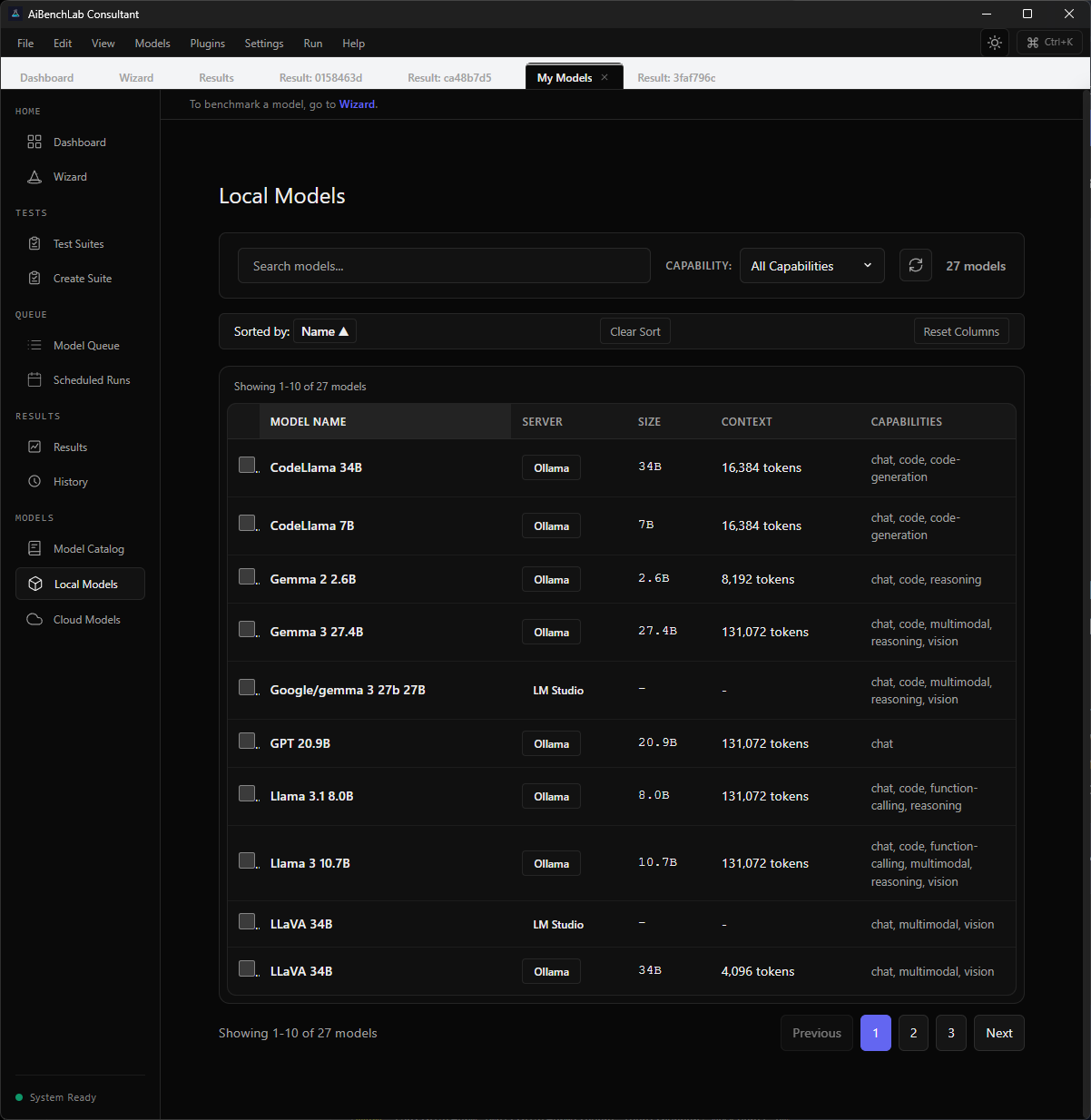

Ollama — Auto-detected at localhost:11434.

All LM Studio — OpenAI-compatible at localhost:1234.

All LocalAI — OpenAI-compatible at localhost:8080.

All Built-in llama.cpp — Managed server with bundled quick-start model.

All OpenAI — Cloud API via generic OpenAI handler.

All Anthropic — Dedicated handler for Claude models.

All Google Gemini — Dedicated handler.

All xAI (Grok) — OpenAI-compatible.

All Groq — OpenAI-compatible.

All Custom OpenAI-compatible — Any endpoint that speaks the OpenAI API format.
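To make "speaks the OpenAI API format" concrete, the sketch below builds (but does not send) a chat completion request for the local ports listed above. The `/v1/chat/completions` path is the conventional OpenAI-compatible route exposed by these servers; the `build_chat_request` helper, the provider keys, and the placeholder API key are hypothetical and say nothing about how AiBenchLab itself connects.

```python
import json
import urllib.request

# Ports come from the provider list; the /v1 prefix is the usual
# OpenAI-compatible base path and is an assumption here.
LOCAL_BASE_URLS = {
    "ollama": "http://localhost:11434/v1",
    "lm_studio": "http://localhost:1234/v1",
    "localai": "http://localhost:8080/v1",
}


def build_chat_request(provider: str, model: str, prompt: str,
                       api_key: str = "not-needed") -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    url = f"{LOCAL_BASE_URLS[provider]}/chat/completions"
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    headers = {
        "Content-Type": "application/json",
        # Local servers typically ignore the key; cloud providers require one.
        "Authorization": f"Bearer {api_key}",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


req = build_chat_request("lm_studio", "my-local-model", "Say hello.")
print(req.get_method(), req.full_url)
```

Passing the request to `urllib.request.urlopen` would send it, provided a server is actually listening on that port with the named model loaded.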

Reports & Exports

PDF single-session report [All*]: Executive summary, per-domain breakdown, individual test details.

PDF comparison report [Pro+]: 10+ configurable sections, scatter plots, cost analysis.

JSON export [Pro+]: Full results with timestamps and hardware metadata.

CSV export [Pro+]: 23 columns including forensic metadata.

MBX signed export [All]: Tamper-evident with SHA-256 content hash and hardware fingerprint.

Batch export (ZIP) [Pro+]: Multiple sessions in one archive. CLI/API only.

Report section customization [Pro+]: 10 toggleable sections in comparison reports.
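The idea behind a tamper-evident export with a SHA-256 content hash can be sketched as follows: serialize the results canonically, hash them together with the hardware fingerprint, and recompute the hash on verification. The MBX format itself is not documented on this page, so the envelope layout and the `seal` and `verify` names are hypothetical, and the signing step implied by "signed export" is omitted.

```python
import hashlib
import hmac
import json


def content_hash(payload: dict) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys, no extra spaces)."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def seal(results: dict, hardware_fingerprint: str) -> dict:
    """Wrap results in a hypothetical envelope that records their hash."""
    body = {"results": results, "hardware_fingerprint": hardware_fingerprint}
    return {**body, "sha256": content_hash(body)}


def verify(envelope: dict) -> bool:
    """Recompute the hash and compare it with the recorded one."""
    body = {
        "results": envelope["results"],
        "hardware_fingerprint": envelope["hardware_fingerprint"],
    }
    return hmac.compare_digest(content_hash(body), envelope["sha256"])


env = seal({"model": "example", "score": 0.84}, "hw-1234")
print(verify(env))            # the untouched envelope verifies
env["results"]["score"] = 0.99
print(verify(env))            # an edited result no longer matches the hash
```

A bare hash only detects accidental or naive edits, since anyone can recompute it after changing the data. A real signed format would also sign the hash with a key the editor does not hold.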

Branding & White-Label

Company name & logo on reports [Pro+]: PNG/JPG/SVG, max 2MB.

Tagline and contact info [Pro+]: Appears on report cover page.

Custom report footer [Pro+]: Your text on every page.

Remove AiBenchLab branding [Consultant+]: Full white-label reports for client delivery.

Consultant+ Security & Integrity

Model fingerprinting (SHA-256) — Hardware + file fingerprinting, no PII.

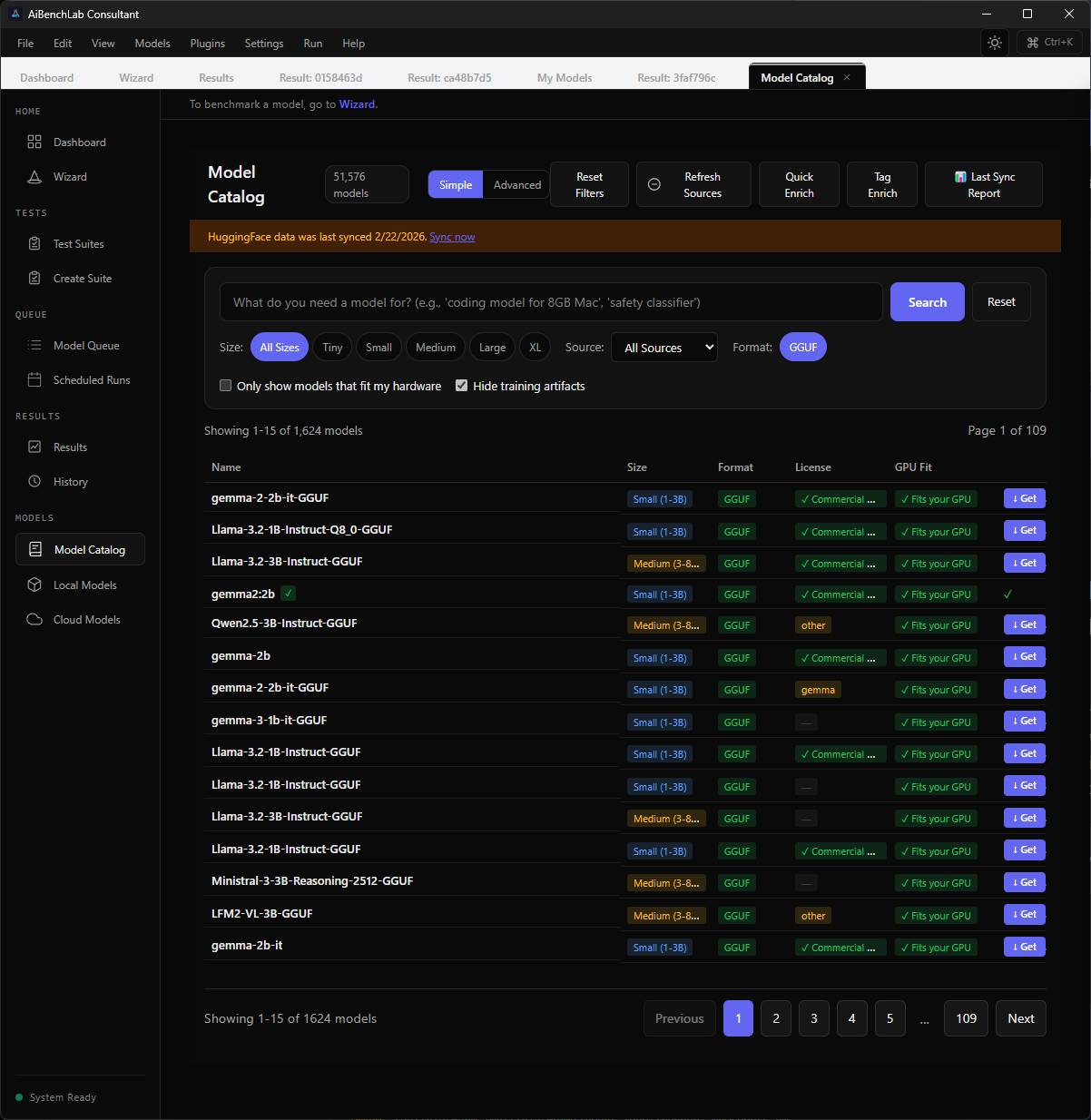

Pro+ GGUF metadata extraction — Native binary parser, 20+ metadata fields.

Pro+ Context window testing (MRCR) — Distributed Anchor Recovery for measuring effective context length.

Pro+ Plugin management — Enable/disable third-party test plugins.

Consultant+ Custom test creation — JSON/YAML definitions with judge model support and prompt templating.

Interfaces

GUI Desktop (Tauri) [All]: Svelte frontend + Rust backend. Full UI with wizard, results, catalog.

CLI interface [Pro+]: Headless: run, export, list-models, estimate-cost.

REST API [Consultant+]: Axum-based with token auth. /health, /models, /benchmark, /export.

MCP Server [Consultant+]: 11 tools via stdio: list_models, run_benchmark, export_report, etc.

Licensing & Support

License duration [All]: 14-day trial, then lifetime for paid tiers.

Seats [All]: 1 (Trial/Pro), 3 (Consultant), Site license per location (Enterprise).

12 months of updates included [Pro+]: Included with every license purchase.

Annual update subscription [Pro+]: Optional renewal for continued updates (version upgrades, new suites, new providers, security patches).

Priority email support [Pro]

Priority + direct support [Consultant]: Priority queue with direct access.

Dedicated support [Enterprise]: Named support contact.

Founding 100 direct channel [Pro+]: Direct access to the developer for the first 100 customers.

* Trial PDF reports are watermarked with redacted test details.

Screenshots

Don't pay for another AI model until you know it can do the job.

Join the waitlist to be first in line when the free trial launches.